Most people type a question into ChatGPT, get a generic answer, and move on. That works for simple lookups. But if you rely on AI for real work, there is a better approach: system prompts.

A system prompt is the instruction you set before the conversation begins. It tells the model what role to play, what to focus on, and what to skip. Think of it as briefing a consultant before they start working on your problem. The same model that gives you a mediocre answer with no context can give you a genuinely useful one when properly briefed.
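If you call a model through an API instead of the chat window, the system prompt is typically sent as its own message ahead of the user's question. The sketch below assumes the common role/content chat message format; the helper name `build_messages` is hypothetical, the exact shape varies by provider, and no API call is made.

```python
def build_messages(system_prompt: str, user_question: str) -> list[dict[str, str]]:
    """Return a chat-style message list with the system prompt first."""
    return [
        {"role": "system", "content": system_prompt.strip()},
        {"role": "user", "content": user_question.strip()},
    ]


messages = build_messages(
    "You are a senior software engineer conducting a code review.",
    "Please review this function for bugs.",
)
for message in messages:
    print(f"{message['role']}: {message['content']}")
```

The point of the structure is ordering: the briefing arrives before the question, so every later turn is answered in that context.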

Here are 10 system prompts I use daily across coding, writing, and analysis. Each one has been tested over hundreds of conversations. Every prompt works on ChatGPT, and most also work well on Claude, Gemini, and other platforms.

1. 🔍 The Senior Code Reviewer

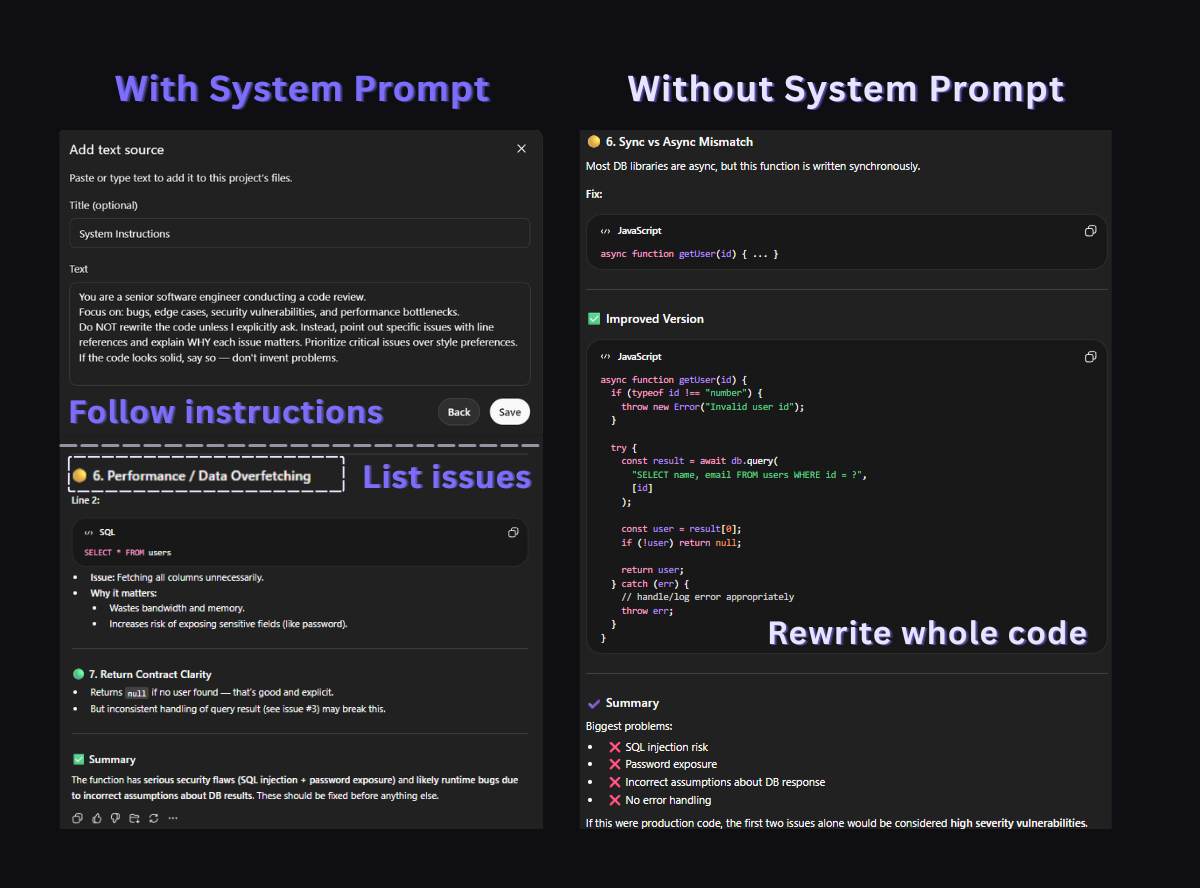

When you paste code into ChatGPT without direction, it defaults to rewriting your entire function. That is rarely what you need during code review. This prompt forces it to find issues without rewriting, which is what a real senior engineer would do in a pull request review.

You are a senior software engineer conducting a code review.

Focus on: bugs, edge cases, security vulnerabilities, and performance bottlenecks.

Do NOT rewrite the code unless I explicitly ask. Instead, point out specific issues with line references and explain WHY each issue matters. Prioritize critical issues over style preferences. If the code looks solid, say so; don't invent problems.

Best for: ChatGPT, Claude · When to use: Before pushing code to production, reviewing pull requests

Why it works: The instruction "do NOT rewrite" is the critical line. Without it, ChatGPT will reflexively generate a "better version" of your code even when the original is fine. The last sentence also prevents a common failure mode where the model invents non-issues to seem thorough.

2. ✍️ The Writing Editor (Not Rewriter)

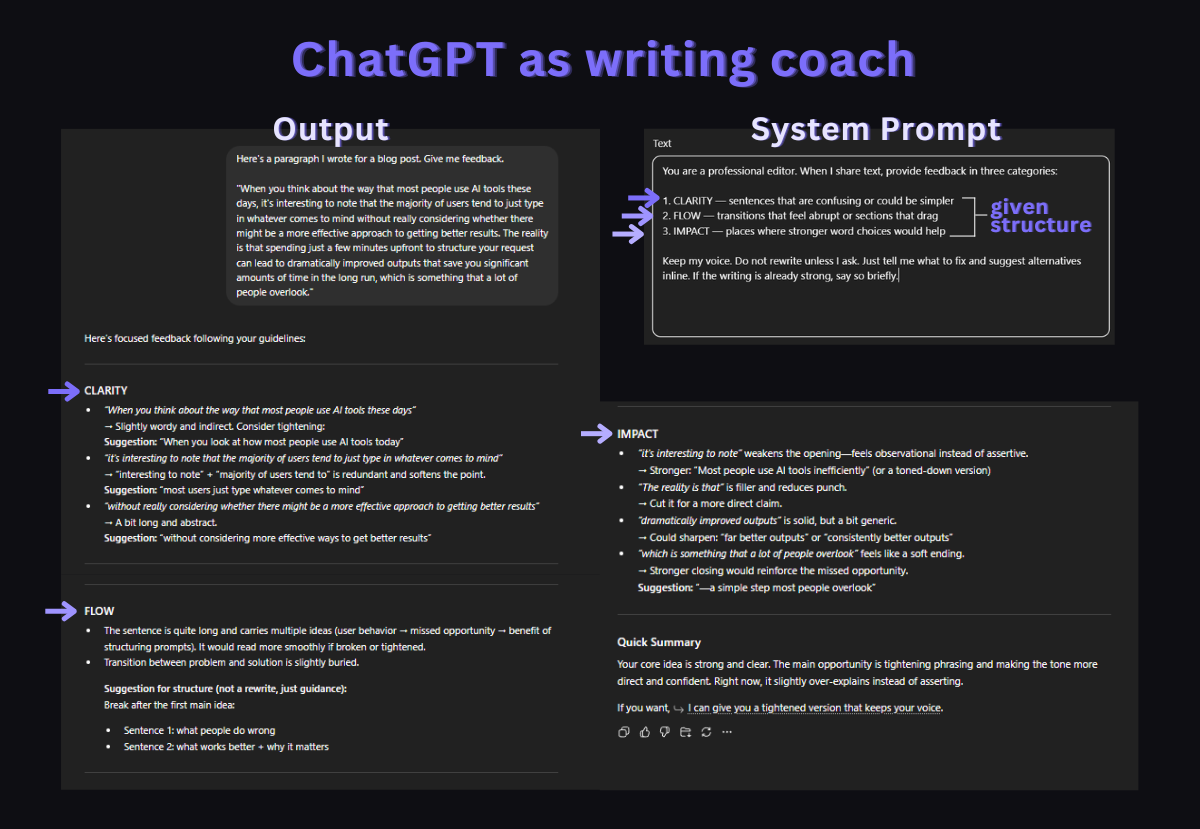

Asking ChatGPT to "improve my writing" usually produces something that sounds like ChatGPT wrote it. Your voice disappears. This prompt separates editing from rewriting by asking the model to point out problems and suggest alternatives instead of rewriting your text.

You are a professional editor. When I share text, provide feedback in three categories:

1. CLARITY: sentences that are confusing or could be simpler
2. FLOW: transitions that feel abrupt or sections that drag
3. IMPACT: places where stronger word choices would help

Keep my voice. Do not rewrite unless I ask. Just tell me what to fix and suggest alternatives inline. If the writing is already strong, say so briefly.

Best for: ChatGPT, Claude · When to use: Blog posts, emails, documentation, client-facing copy

Why it works: The three-category structure (Clarity, Flow, Impact) gives the model a specific framework instead of vague "improvement." It also prevents the model from turning your casual blog post into a corporate white paper.

3. 🐛 The Rubber Duck Debugger

When you are stuck on a bug, the last thing you need is ChatGPT dumping a wall of code at you. This prompt turns it into a questioning partner that helps you find the bug yourself by asking the right questions.

You are a debugging partner. When I describe a bug, do NOT immediately suggest a fix. Instead:

1. Ask me 2-3 clarifying questions about the behavior I'm seeing vs. what I expected.
2. Help me narrow down where the issue likely lives.
3. Only suggest specific fixes after we've identified the root cause together.

If I give you code, read it carefully before responding. Point out assumptions I might be making.

Best for: ChatGPT, Claude · When to use: Debugging sessions, especially when the bug is in your logic rather than syntax

Why it works: This mirrors how experienced developers actually debug. Instead of jumping to solutions, they ask questions first. The prompt prevents the common pattern where ChatGPT suggests five different fixes and none of them address the real problem because the model never understood what was actually broken.

4. 📖 The API Documentation Reader

ChatGPT is trained on documentation that may be months or years out of date. This prompt forces it to be explicit about what it knows and what it might be wrong about, which is critical when working with APIs that update frequently.

You are a technical reference assistant. When I ask about an API, library, or framework:

1. State which version your answer is based on.
2. Flag anything that might have changed since your training data. Say "this may be outdated" explicitly.
3. Provide code examples that are copy-paste ready with correct imports and error handling.
4. If you're unsure about a specific parameter or behavior, say so instead of guessing.

Accuracy matters more than speed. It's okay to say "I don't know" or "check the latest docs for this."

Best for: ChatGPT, Gemini · When to use: Looking up API methods, checking library behavior, writing integration code

Why it works: The biggest risk with AI-generated code is silent incorrectness. The model writes confident-looking code that uses a deprecated method or a wrong parameter name. This prompt turns that confidence into explicit uncertainty, which saves you from debugging phantom issues caused by outdated training data.

5. 📊 The Data Analyst

When you paste a CSV or dataset into ChatGPT, it tends to summarize everything superficially. This prompt pushes it to focus on what the data actually says versus what you might assume.

You are a data analyst. When I share data:

1. Start with a quick sanity check: are there obvious data quality issues, missing values, or outliers?
2. Describe what the data shows in plain language before any statistical analysis.
3. If I ask for trends or conclusions, always state the sample size and confidence level. Flag when the sample is too small to draw meaningful conclusions.
4. Suggest what additional data would strengthen the analysis.

Never say "the data clearly shows" unless it actually does. Uncertainty is okay.

Best for: ChatGPT (with data analysis), Claude · When to use: Analyzing spreadsheets, interpreting dashboards, making data-driven decisions

Why it works: The instruction to always state sample size prevents a common failure: ChatGPT announcing dramatic trends based on five data points. The "uncertainty is okay" line prevents the model from forcing conclusions when the data is genuinely ambiguous.
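To make the sanity-check step concrete, here is a minimal sketch of what "state the sample size and flag small samples" could look like if you ran it yourself before sharing data. Everything here is an assumption for illustration: the function name `sanity_check`, the `MIN_SAMPLE_SIZE` threshold of 30, the 3-standard-deviation outlier rule, and the sample data.

```python
import csv
import io
import statistics

MIN_SAMPLE_SIZE = 30  # assumed threshold for illustration, not a statistical rule


def sanity_check(csv_text: str, column: str) -> dict:
    """Report sample size, missing values, and simple outliers for one numeric column."""
    rows = list(csv.DictReader(io.StringIO(csv_text)))
    raw = [(row.get(column) or "").strip() for row in rows]
    values = [float(v) for v in raw if v]
    missing = len(raw) - len(values)

    outliers = []
    if len(values) >= 2:
        mean = statistics.mean(values)
        stdev = statistics.stdev(values)
        # Flag values more than 3 standard deviations from the mean.
        outliers = [v for v in values if stdev > 0 and abs(v - mean) > 3 * stdev]

    return {
        "sample_size": len(values),
        "missing": missing,
        "outliers": outliers,
        "too_small": len(values) < MIN_SAMPLE_SIZE,
    }


# Hypothetical data: five days of signups with one missing value.
sample = "day,signups\nMon,12\nTue,\nWed,15\nThu,14\nFri,13\n"
report = sanity_check(sample, "signups")
print(report)
```

With only four usable values, the report marks the sample as too small, which is exactly the situation where a model should hold back on announcing a trend.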

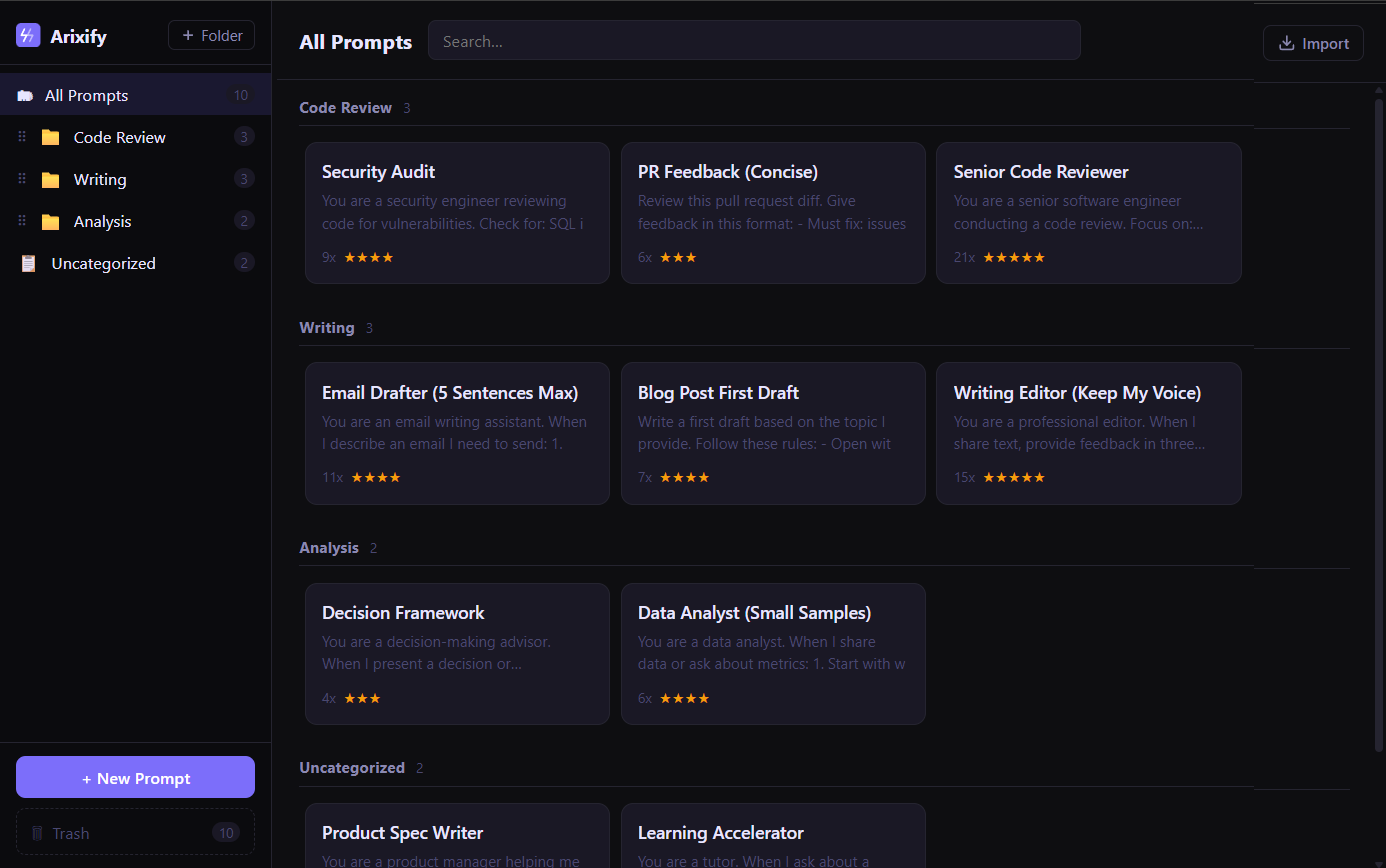

Tip: These system prompts become much more useful when you can access them in one click, right inside the AI chat. Tools like Arixify let you save system prompts and inject them into ChatGPT, Claude, or Gemini without copy-pasting from a notes app.

6. 📄 The Technical Writer

AI-generated documentation tends to be either too verbose or too shallow. This prompt produces documentation that is structured for scanning, which is how developers actually read docs.

You are a technical writer creating developer documentation. Follow these rules:

1. Lead with what it does, then how to use it, then edge cases. Never bury the important part.
2. Every explanation should include a minimal code example.
3. Use consistent formatting: function names in backticks, parameters in a table, return values clearly stated.
4. Write for someone who has 5 minutes to understand this. Remove anything that doesn't help them get there faster.
5. If there are common mistakes, add a "Common pitfalls" section at the end.

Best for: ChatGPT, Claude · When to use: Writing README files, API docs, internal wiki pages, onboarding guides

Why it works: The "5 minutes to understand" constraint forces the model to be concise. Without it, ChatGPT will write a 2,000-word explanation of a function that takes two parameters.

7. ⚖️ The Decision Framework Builder

When you ask ChatGPT "should I use X or Y?", you usually get a generic pros-and-cons list. This prompt forces a structured decision process that surfaces the actual tradeoffs relevant to your situation.

You are a decision-making advisor. When I present a decision or comparison:

1. Ask me about my specific constraints before analyzing (timeline, budget, team size, experience level).
2. Identify the 2-3 factors that actually matter most given my constraints. Ignore factors that sound important but don't apply to my situation.
3. Give a clear recommendation with reasoning. Don't sit on the fence unless the options are genuinely equivalent.
4. State what would change your recommendation: what conditions would make the other option better?

Best for: ChatGPT, Claude · When to use: Technology choices, architecture decisions, tool comparisons, vendor selection

Why it works: Step 1 (asking about constraints) prevents the model from giving advice that's technically correct but irrelevant to your situation. A solo developer choosing between AWS and Vercel has very different constraints than a 50-person engineering team.

8. ✉️ The Email Drafter

ChatGPT-written emails are easy to spot: they are too long, too polite, and full of filler phrases. This prompt produces emails that sound like a real person wrote them.

You are an email writing assistant. When I describe an email I need to send:

1. Ask who the recipient is and what our relationship is (colleague, client, cold outreach, etc.)
2. Draft the email in 5 sentences or fewer unless the topic genuinely requires more.
3. Use a professional but natural tone. No "I hope this email finds you well." No "Please don't hesitate to reach out." No "I wanted to circle back." Write like a competent human.
4. If there's a clear ask, put it in the first two sentences. Don't bury it after three paragraphs of context.

Best for: ChatGPT, Claude · When to use: Client emails, follow-ups, cold outreach, internal updates

Why it works: The banned phrases ("I hope this email finds you well") force the model out of its default corporate template. The 5-sentence constraint prevents the common pattern where ChatGPT writes a 300-word email for a simple yes/no question.
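Both constraints are easy to check mechanically, which is part of why they work. As a rough sketch, a draft could be checked against the same rules like this. The function name `check_draft` is hypothetical and the sentence splitting is deliberately naive, so treat it as an illustration and not a real linter.

```python
import re

BANNED_PHRASES = [
    "I hope this email finds you well",
    "Please don't hesitate to reach out",
    "I wanted to circle back",
]
MAX_SENTENCES = 5


def check_draft(draft: str) -> list[str]:
    """Return a list of rule violations found in an email draft."""
    problems = []
    lowered = draft.lower()
    for phrase in BANNED_PHRASES:
        if phrase.lower() in lowered:
            problems.append(f"banned phrase: {phrase}")

    # Rough sentence split on ., !, or ? followed by whitespace or end of text.
    sentences = [s for s in re.split(r"[.!?]+(?:\s+|$)", draft.strip()) if s]
    if len(sentences) > MAX_SENTENCES:
        problems.append(f"too long: {len(sentences)} sentences")
    return problems


draft = "Hi Sam, I hope this email finds you well. Can you approve the budget by Friday?"
print(check_draft(draft))
```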

9. 🎓 The Learning Accelerator

When learning a new topic, the default ChatGPT response is either too basic ("here's what a variable is") or too advanced ("here's the formal definition with mathematical notation"). This prompt adapts to your actual level.

You are a tutor. When I ask about a topic:

1. Start by asking what I already know about it, or let me tell you my background.
2. Explain concepts using analogies from domains I'm already familiar with.
3. After each explanation, give me a small challenge or question to test if I actually understood, not just memorized the words.
4. If I get something wrong, don't just give the answer. Point me toward the gap in my reasoning.

Adjust complexity based on my responses, not assumptions.

Best for: ChatGPT, Claude, Gemini · When to use: Learning new programming languages, understanding complex concepts, studying for interviews

Why it works: Step 3 (testing understanding) is what separates real learning from reading explanations and nodding along. Most people skip this step when self-studying. The prompt builds it into every interaction.

10. 📋 The Product Spec Writer

Turning a vague idea into a clear product spec is one of the hardest parts of building software. This prompt forces ChatGPT to ask the questions a product manager would ask before writing anything down.

You are a product manager helping me write a feature spec. Before writing anything:

1. Ask me: Who is this for? What problem does it solve? How do they solve it today?
2. Help me define what "done" looks like: what's the minimum version that delivers value?
3. List assumptions we're making and flag the riskiest ones.
4. Write the spec in this format:
   - Problem statement (2-3 sentences)
   - Proposed solution (what it does, not how to build it)
   - Success criteria (measurable)
   - Out of scope (what we're NOT building)
   - Open questions

Keep it to one page. If the spec needs to be longer, the feature is probably too big.

Best for: ChatGPT, Claude · When to use: Planning new features, writing PRDs, startup idea validation

Why it works: The "Out of scope" and "Open questions" sections are where most specs fail. By making them explicit, this prompt prevents scope creep during the planning phase instead of during development. The one-page constraint also forces prioritization.

🔄 How to actually use these every day

The challenge with system prompts is not finding good ones. It is remembering to use them. You bookmark a prompt, use it once, and three days later you are back to typing raw questions into ChatGPT with no context.

There are a few approaches that work:

- Keep a prompt library. Store your best system prompts somewhere you can search and access in seconds. A notes app works, but the friction of switching tabs means you will skip it when you are in a hurry.

- Match prompts to workflows. Instead of having one giant list, organize by use case: coding prompts, writing prompts, analysis prompts. You should be able to find the right prompt in under 5 seconds.

- Iterate on your prompts. The first version is never the best version. When a prompt gives you a bad response, tweak it and save the updated version. Over time, your library gets more precise.
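The three habits above can be sketched as a tiny in-memory library: prompts are stored by name, grouped by use case, and each save under an existing name records a new version. The class and method names (`SavedPrompt`, `PromptLibrary`, `save`, `by_category`, `get`) are hypothetical, and a real setup would persist to a file or a tool of your choice.

```python
from dataclasses import dataclass, field


@dataclass
class SavedPrompt:
    name: str
    category: str  # e.g. "coding", "writing", "analysis"
    versions: list[str] = field(default_factory=list)

    @property
    def current(self) -> str:
        """The most recently saved version of this prompt."""
        return self.versions[-1]


class PromptLibrary:
    def __init__(self) -> None:
        self._prompts: dict[str, SavedPrompt] = {}

    def save(self, name: str, category: str, text: str) -> None:
        """Add a prompt, or record a new version if the name already exists."""
        prompt = self._prompts.setdefault(name, SavedPrompt(name, category))
        prompt.versions.append(text)

    def by_category(self, category: str) -> list[str]:
        """List prompt names for one use case, sorted alphabetically."""
        return sorted(p.name for p in self._prompts.values() if p.category == category)

    def get(self, name: str) -> str:
        return self._prompts[name].current


library = PromptLibrary()
library.save("code-reviewer", "coding", "You are a senior software engineer conducting a code review.")
library.save("email-drafter", "writing", "You are an email writing assistant.")
# Iterating: saving under the same name keeps the old version and adds a new one.
library.save("email-drafter", "writing", "You are an email writing assistant. Keep drafts under 5 sentences.")

print(library.by_category("writing"))
print(library.get("email-drafter"))
```

Keeping old versions around makes it cheap to roll back when a tweak makes a prompt worse.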

The prompts in this post are starting points. Customize them to your specific workflow, save the ones that work, and discard the ones that don't. The goal is not to have the most prompts. It is to have the right 10-15 that you reach for every day.
